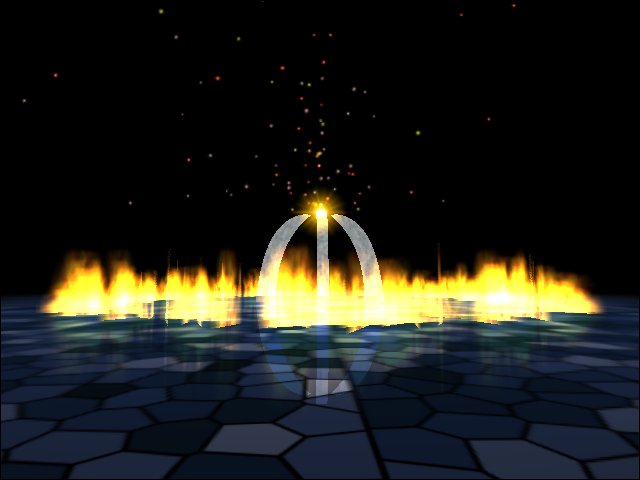

First I'd like to wish you all the best for the new year, and hope that you are able to enjoy the last year of the millennium (I had to fit that word in somewhere, even though it's one year too early ;) I've had the chance to spend some quality development time on a TNT2 system over the last 2 weeks, and believe me, compared to programming for a Voodoo2 this is heaven ;) Unfortunately, I haven't done half as much work as I hoped I would.

The most important area on which I focused my attention was generic shaders, and their rendering capabilities with hardware acceleration. Basically these things are so powerful that once you've got them working, the only limit is your ability to describe the surface texture you wish to render. For those of you that don't know what shaders are, I'll briefly go over the basics.

What are Shaders?

A shader is usually a small script, or a piece of code, that tells the rendering engine how to draw the texture on a given surface. Now this is a very generic definition, and can be applied to pretty much all rendering techniques. For example, most common ray-tracers support parametric surface texture description in some way or another, but this is usually extremely low level: you control the parameters of some predefined noise functions (like the keyword "bozo" in POV-Ray). Q3A also uses shaders, and that's why there's a lot of buzz about them at the moment. I'd expect most future games to implement them now, simply because id Software did it. And that's why I got into them ;)

Shaders Hardware Acceleration

Using shaders with hardware acceleration is much higher level than for ray tracing. Usually, you draw some textures beforehand, and you use the shader script to define how to blend them together.
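
To make "blending" a bit more concrete, here is a minimal software sketch of what happens when one layer is blended over whatever is already in the frame buffer: each channel becomes src * srcFactor + dst * dstFactor. The `Color` struct, the `Factor` enum and the `blend` function are hypothetical names made up for illustration, not part of OpenGL or any engine, and only three factors (modelled on OpenGL's GL_ZERO, GL_ONE and GL_DST_COLOR) are covered. Real hardware does this per pixel.

```cpp
#include <algorithm>

// Hypothetical RGB colour with channels in [0, 1].
struct Color { float r, g, b; };

// A small subset of blend factors, named after their OpenGL counterparts.
enum class Factor { Zero, One, DstColor };

// Scale colour c by the chosen factor; dst is the current frame buffer colour.
static Color applyFactor(Color c, Factor f, Color dst) {
    switch (f) {
        case Factor::Zero:     return {0.0f, 0.0f, 0.0f};
        case Factor::One:      return c;
        case Factor::DstColor: return {c.r * dst.r, c.g * dst.g, c.b * dst.b};
    }
    return c;
}

// result = src * srcFactor + dst * dstFactor, clamped to 1.0 per channel.
Color blend(Color src, Color dst, Factor srcFactor, Factor dstFactor) {
    Color s = applyFactor(src, srcFactor, dst);
    Color d = applyFactor(dst, dstFactor, dst);
    return {std::min(1.0f, s.r + d.r),
            std::min(1.0f, s.g + d.g),
            std::min(1.0f, s.b + d.b)};
}
```

With `Factor::DstColor` as the source factor and `Factor::Zero` as the destination factor, the incoming texel is simply multiplied by what is already in the frame buffer, which is how a texture gets darkened by a lightmap drawn first.
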
To do this in Q3A, a special scripting language was defined that handles some very basic cases (all blending types, some simple texture coordinate generation functions, basic colour generation functions and various other parameters used in the rendering). Of course, you can combine these at will, which makes them that much more effective. The "combining" is done via layers. Each layer has a different texture, colours, texture coordinates, and alpha coefficients. For example, you draw a lightmap into the frame buffer, then blend in a second layer using GL_DST_COLOR / GL_ZERO. The third layer will be blended with whatever is in the frame buffer after the second blending.

When Q3A was under development, I thought that the challenge here was to make sure that multi-texturing was used as much as possible. But in effect, I get the impression the engine always does single passes, which is a bit of a shame... OK, admittedly, you can't do many different types of blending with multi-texturing, but I'm still convinced there would be a speed gain. For example, if 3 layers of detail textures are used with a lightmap, this can be drawn as 2 double passes or one quad pass in GL_MODULATE mode. I'll give that a go when I get more time, and keep you informed.

Interpreting Simple Shader Scripts

One way of doing things is to define a series of given operations you can perform for each layer, and create a series of opcodes for each operation. So as you read in your shader script, you would generate corresponding byte code by storing these opcodes and their operands in memory. Then every time you re-tessellate your surfaces, or every time you draw them (depending on whether you cache everything or not), you would interpret the given byte code. The speed at which this is done is acceptable, but the technique is not very generic. One advantage is safety, since you can only render what the engine was taught to render.
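
A minimal sketch of that idea, assuming a made-up script syntax with just two keywords ("scale" and "scroll", each taking two numbers). The opcode names, the `Instr` layout and the `compile` / `run` functions are all hypothetical and not taken from Q3A or any real engine. The script is turned into a flat list of opcodes and operands once, and that list is then interpreted to fill the texture coordinate array of a layer.

```cpp
#include <sstream>
#include <string>
#include <vector>

// Hypothetical opcodes: one per operation the engine was taught to perform.
enum Op : int { OP_SCALE, OP_SCROLL, OP_END };

struct Instr { Op op; float a, b; };   // an opcode plus its two operands
struct TexCoord { float s, t; };

// Translate a script such as "scale 2 2 scroll 0.5 0" into byte code.
// Unknown keywords are rejected, which is where the safety comes from.
bool compile(const std::string& script, std::vector<Instr>& code) {
    std::istringstream in(script);
    std::string word;
    code.clear();
    while (in >> word) {
        Instr i{OP_END, 0.0f, 0.0f};
        if (word == "scale")       i.op = OP_SCALE;
        else if (word == "scroll") i.op = OP_SCROLL;
        else return false;                       // not a known operation
        if (!(in >> i.a >> i.b)) return false;   // missing or bad operands
        code.push_back(i);
    }
    code.push_back({OP_END, 0.0f, 0.0f});
    return true;
}

// Interpret the byte code for one layer, transforming every texture
// coordinate in place. 'time' drives the scroll animation.
void run(const std::vector<Instr>& code, float time, std::vector<TexCoord>& tc) {
    for (const Instr& i : code) {
        if (i.op == OP_END) break;
        for (TexCoord& c : tc) {
            if (i.op == OP_SCALE)       { c.s *= i.a;        c.t *= i.b; }
            else if (i.op == OP_SCROLL) { c.s += i.a * time; c.t += i.b * time; }
        }
    }
}
```

Adding a new effect means adding an opcode, a keyword in `compile` and a case in `run`, which illustrates why the approach is safe but not very generic.
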
Another advantage is that anyone can create shader scripts, as long as they have a copy of the game / engine.

Hardcoding Shader Procedures

My main reason for coding some sample shaders was to texture my landscapes. I quickly found that being limited to a set of predefined procedures would be a pain. This could be fixed by having a larger set of operations, but this would be tedious to implement and possibly overkill, since some operations may never be used at all. So I realised what I really needed for each layer was a way of creating a procedure that would fill my colour array and texture coord array. I could do this by compiling some source code at runtime, but this would involve writing a whole compiler, and I'm not too keen on that idea.

An easy way out would be to use hardcoded functions, which could be stored in a DLL and loaded at runtime. The user would however need a compiler and possibly the headers of the engine, depending on how much control he wishes his shader to have. Another major disadvantage is safety: the engine can't check the content of the hardcoded procedures, so a procedure could format the HD without us noticing. On the other hand, this would be extremely quick, completely generic, simple to implement and no useless code would be written. I've used this technique so far, and beta tested it by converting my old 'Circle of Fire' effect to use this shader.

The floor is a bilinear (2x2) Bezier patch, with one layer and a lightmap, the columns are linear-quadratic (2x3) patches with a single texture, and the flames are animated textures on polygons. There are still some depth-related clipping bugs (flames are visible when they should be hidden), but they have nothing to do with the shader.

Final Words

I'm still not sure what I'll implement in the end. I may have to settle for limited operations and interpreted code. This would be enough to implement my Perlin noise landscape texturer, but wouldn't be generic.
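
For comparison with the interpreted route, the hardcoded approach described earlier boils down to one compiled function per layer that fills the colour and texture coordinate arrays. The following is only a rough sketch: the `LayerProc` signature, the `heightFade` procedure and the `findProc` lookup are hypothetical names, and a plain in-process table stands in for loading the function from a DLL at runtime.

```cpp
#include <algorithm>
#include <cstddef>
#include <map>
#include <string>

struct Vertex   { float x, y, z; };
struct Color    { float r, g, b, a; };
struct TexCoord { float s, t; };

// Signature every hardcoded layer procedure must have (an assumption).
typedef void (*LayerProc)(const Vertex* verts, std::size_t count, float time,
                          Color* colours, TexCoord* coords);

// Example procedure: planar mapping from x/z, brightness taken from height.
// It is ordinary compiled code, so the engine cannot inspect what it does.
void heightFade(const Vertex* v, std::size_t n, float /*time*/,
                Color* col, TexCoord* tc) {
    for (std::size_t i = 0; i < n; ++i) {
        float k = std::max(0.0f, std::min(1.0f, v[i].y));  // clamp to [0, 1]
        col[i] = {k, k, k, 1.0f};
        tc[i]  = {v[i].x, v[i].z};
    }
}

// Stand-in for looking up an exported symbol in a DLL by name.
LayerProc findProc(const std::string& name) {
    static const std::map<std::string, LayerProc> table = {
        {"heightFade", heightFade},
    };
    auto it = table.find(name);
    return it == table.end() ? nullptr : it->second;
}
```

The engine would call `findProc` once per layer when loading a shader, then invoke the returned pointer whenever the surface is re-tessellated or drawn. There is no parsing or interpretation on that path, which is where the speed comes from.
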
If you have any thoughts on the topic, start a thread in the discussion board, or email me. I won't have much time in the near future to try out more implementation ideas, since my exams are looming, and I have to cram a lot of definitions for my database module... bah!! I hate it when common sense is not enough ;)

Best Wishes,
Alex
